Romania’s stolen elections were only the start: Inside the EU’s war on democracy

How Brussels’ Digital Services Act has been used to pressure platforms and exert electoral control in member states

RT | February 18, 2026

Romania’s 2024 presidential election was already one of the most controversial political episodes in the European Union in recent years. A candidate who won the first round was prevented from contesting the second. The vote was annulled. Claims of Russian interference were advanced without public evidence.

At the time, the affair raised urgent questions about democratic standards inside the EU. Newly disclosed documents reviewed by RT Investigations go further. They indicate that the annulment of the Romanian election was accompanied by sustained efforts to pressure social media platforms into suppressing political speech – efforts coordinated through mechanisms established under the EU’s Digital Services Act.

What appeared to be a national political crisis now looks increasingly like a test case for how far EU institutions are willing to go in intervening in the political processes of member states.

The Russian narrative. Again.

On February 3, the US House Judiciary Committee published a 160-page investigation into how the EU systematically pressures social media companies to alter internal guidelines and suppress content. It found Brussels orchestrated a “decade-long campaign” to censor political speech across the bloc. In many cases, this amounted to direct meddling in political processes and elections of members, often using EU-endorsed civil society organizations. The report features several case studies of this “campaign” in action in EU member states, the gravest example being Romania.

It was around the November 2024 Romanian presidential election, the committee found, that the European Commission “took its most aggressive censorship steps.” In the first round, anti-establishment outsider Calin Georgescu comfortably prevailed, and polls indicated he was en route to winning the second by a landslide. However, on December 6, Bucharest’s constitutional court overturned the results. While a court-ordered recount found no irregularities in the process, a new election was called, in which Georgescu was banned from running.

By contrast, Romania’s security service alleged Georgescu’s victory was attributable to a Russian-orchestrated TikTok campaign. The allegation was unsupported by any evidence whatsoever. Romanian President Klaus Iohannis went so far as to claim that this very absence of evidence was proof of Moscow’s culpability, as the Russians supposedly “hide perfectly in cyber space.” Despite the BBC reporting that even Romanians “who feared a president Georgescu” worried about the precedent set for their democracy by the move, that narrative has been endlessly reiterated ever since.

The US House Judiciary Committee report comprehensively disproves the charge of Russian meddling in the Romanian election. Documents and emails provided by TikTok expose how the platform not only consistently assessed Moscow “did not conduct a coordinated influence operation to boost Georgescu’s campaign,” but repeatedly shared these findings with the European Commission and Romanian authorities. This information was never shared by either party. But the contempt of Brussels and Bucharest for democracy and free speech went much further.

Digital Services Act in action

The committee found Romanian officials egregiously abused the EU’s controversial Digital Services Act before the 2024 election “to silence content supporting populist and nationalist candidates.” Bucharest also repeatedly lodged content takedown requests outside of the formal DSA process, using what committee investigators call “expansive interpretations of their own power to mandate removals of political content.” This amounted to a “global takedown order,” with authorities perversely arguing court demands to block certain content for local audiences were “mandatory not only in Romania.”

This was no doubt a ploy to prevent outsiders, in particular the country’s sizable diaspora, from accessing content featuring Georgescu. His “Romania First” agenda proved quite popular with emigres, numbering many millions due to mass depopulation since 1989. Perhaps not coincidentally, his diaspora supporters have been widely maligned by Western media as fascist enablers. Still, even critical mainstream reports admit they and the domestic population have legitimate grievances, due to Romania’s crushing economic decline in the same period.

Bucharest would clearly stop at nothing to ensure the ‘correct’ candidate prevailed in the first round. Removal demands were plentiful, and on the rare occasions that legal justification was provided, it was based on a “very broad interpretation” of the election authority’s power. For example, TikTok was ordered to remove content that was “‘disrespectful and insults the PSD party’” – a left-wing political faction that was part of the country’s ruling coalition at the time. TikTok twice sought further details of the grounds for this request, but none was forthcoming.

Once Georgescu prevailed, and before the election was annulled, Romanian orders became even more aggressive. Regulators told TikTok that “all materials containing Calin Georgescu images must be removed,” again without any legal basis whatsoever. This proved a step too far for the platform, which refused to remove the posts. It wasn’t just naked political pressure to which TikTok refused to bend. Brussels and Bucharest were assisted first in electoral fraud, then autocratic annulment of the vote’s legitimate result, by local EU-sponsored NGOs.

These were organizations “empowered by the European Commission to make priority censorship requests – either as [EU Digital Service Act] Trusted Flaggers or through the Commission’s Rapid Response System.” Despite their supposed neutrality, the NGOs “made politically biased content removal demands.” For example, the EU-funded Bulgarian-Romanian Observatory of Digital Media “sent TikTok spreadsheets containing hundreds of censorship requests in the days after the first round of the initial election.” The committee characterized much of the flagged content as “pro-Georgescu and anti-progressive political speech.”

This included posts related to “Georgescu’s positions on environmental issues and Romania’s membership in the Schengen Area, and the EU’s system of open borders.” In other words, this was content espousing standard, popular conservative viewpoints, which are absolute anathema to Brussels and Bucharest’s pro-EU elite. Since the committee’s report was released, references to the Bulgarian-Romanian Observatory of Digital Media’s EU financing have been deleted from its website.

After the vote

The day after the election was annulled, TikTok wrote to the European Commission, stating plainly it had not found or been presented with evidence of a coordinated network of accounts promoting Georgescu. Undeterred by TikTok’s denials and scarcely bothered by the lack of material evidence, the European Commission pressed forward and demanded information about TikTok’s political content moderation practices and enquired about “changes” to its “processes, controls, and systems for the monitoring and detection of any systemic risks.”

The European Commission also used the “still-unproven narrative” of Russian meddling “to pressure TikTok to engage in more aggressive political censorship.” In response, the platform informed the commission that it would censor content featuring the terms “coup” and “war” – clear references to the perception that democratic processes had been undermined in Romania – “for the next 60 days to mitigate the risk of harmful narratives.” But this was still insufficient for the censorship-crazed commission.

On December 17, 2024, the European Commission opened a formal investigation into TikTok over “a suspected breach of the DSA” – in other words, failing to sufficiently censor content before and after the first round of Romania’s presidential election. The platform was accused of failing to uphold its “obligation to properly assess and mitigate systemic risks linked to election integrity” locally. EU efforts to bring the platform to heel didn’t end there, either.

In February 2025, TikTok’s product team was summoned for a meeting with the EU’s Directorate-General for Communications Networks, Content and Technology. There, they were lectured over the platform’s supposedly “deceptive behavior policies and enforcement” and “potential[ly] ineffective” DSA “mitigation” measures. The US House Judiciary Committee found that the European Commission’s decision to meet TikTok’s product team, “rather than the government affairs and compliance staff whose job it was to manage TikTok’s relationship with the Commission, indicates the European Commission sought deeper influence over the platform’s internal moderation processes.”

Georgescu and the many Romanians who wished to elect him president were punished even more severely. Two weeks after TikTok was threatened by the European Commission, the upstart hopeful was arrested in Bucharest en route to registering to run in the new election that May. Georgescu was charged with “incitement to actions against the constitutional order.” Since then, he has been accused by authorities of plotting a coup and involvement in a million-euro fraud.

When Georgescu’s case finally reached trial this February, these accusations were dropped. He is instead charged with peddling “far-right propaganda.” A report on his prosecution from English-language news website Romania Insider repeated the fiction he owed his first-round victory to a “targeted social media campaign,” managed by “entities linked to Russia.” In the meantime, establishment-preferred candidate Nicusor Dan won the presidency. No doubt satisfied with the integrity of the democratic process given Georgescu was barred from participating, Romania’s Constitutional Court quickly validated the result.

Beyond Romania

Per the US House Judiciary Committee, Romania’s stolen 2024 presidential election is the most extreme example of the EU and member state authorities conspiring to subvert democracy and trample on popular will. But it is just one of many. Since the Digital Services Act came into force in August 2023, the European Commission has pressured platforms to censor content ahead of national elections in Slovakia, the Netherlands, France, Moldova, and Ireland, as well as the EU elections in June 2024.

“In all of these cases… documents demonstrate a clear bias toward censoring conservative and populist parties,” the committee concluded. Ahead of the EU elections, TikTok was pressured into censoring over 45,000 pieces of purported “misinformation.” This included what the report deemed “clear political speech” on topics such as migration, climate change, security and defense, and LGBTQ rights. There is no indication Brussels has been deterred from its quest to prevent the ‘wrong’ candidates being elected to office in member states, or citizens expressing dissenting opinions.

In fact, we can expect these efforts to ramp up significantly. For one, the US committee’s bombshell report generated almost no mainstream interest, indicating Brussels can and will get away with it again. Even more urgently, in April, Hungary goes to the polls. Already, the narrative that ruling conservative Viktor Orban intends to rig the vote to secure victory is being widely perpetuated. And the EU’s censorship apparatus stands ready to validate that narrative, regardless of truth or popular will.

Hawaii bills would allow gov’t to quarantine people, enter property without permission, seize firearms, and suspend laws

HB 2236 and SB 2151 make the governor the “sole judge” of an emergency, allow sweeping powers based on a perceived threat alone.

By Jon Fleetwood | February 18, 2026

The Hawaii Legislature is advancing companion legislation that would formally codify sweeping emergency powers for the governor and county officials—including authority to quarantine individuals, enter private property without consent, suspend laws, and seize control of infrastructure—under the justification of preparing for future disasters and disease outbreaks.

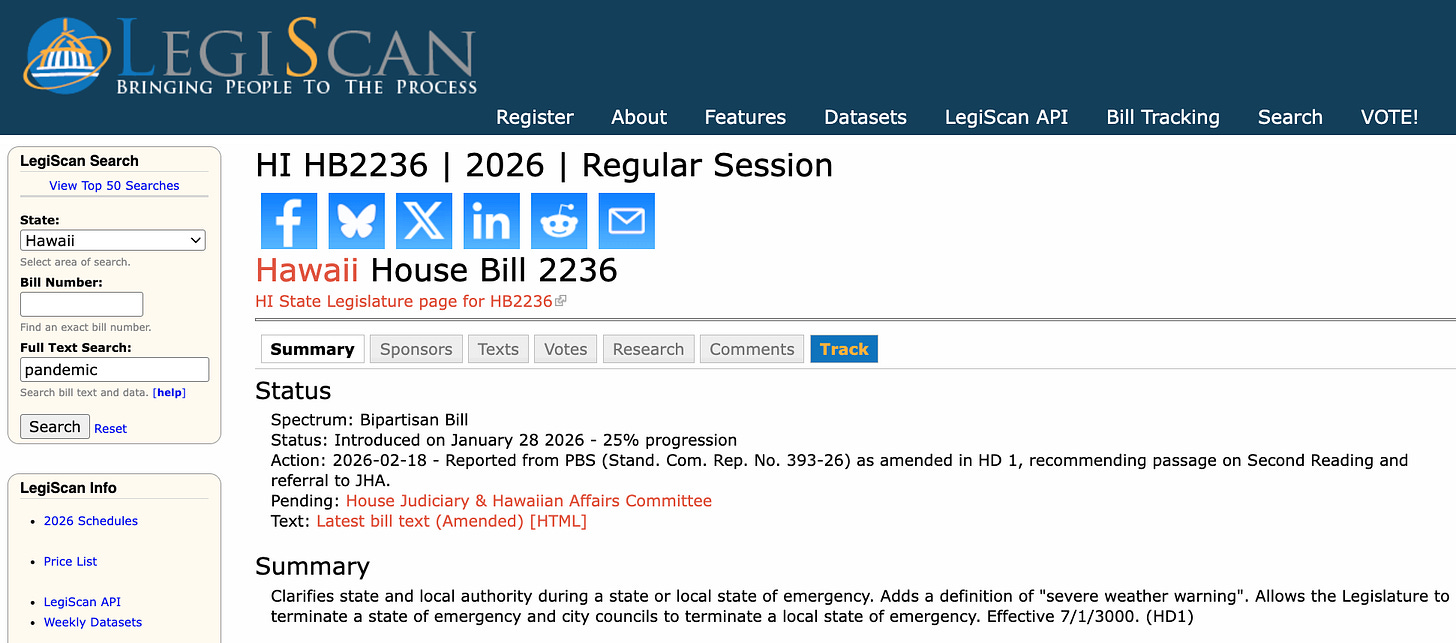

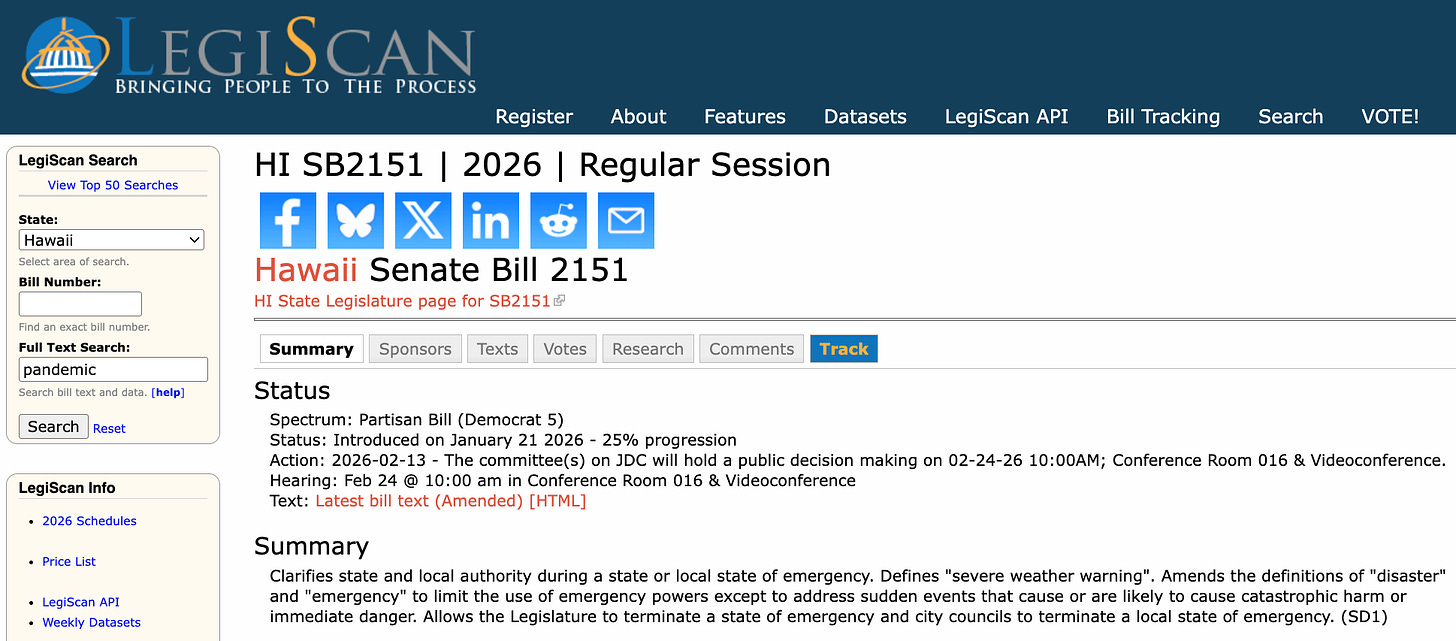

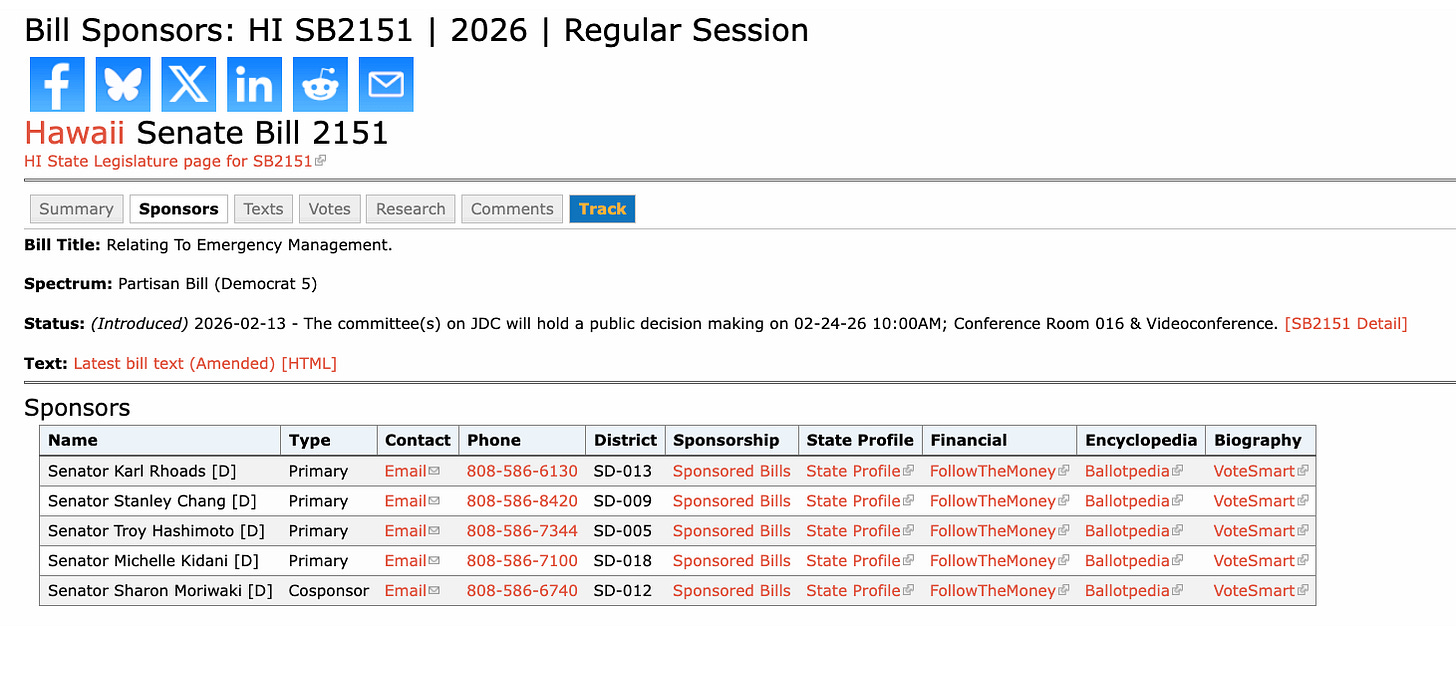

House Bill 2236 and Senate Bill 2151, both titled “Relating to Emergency Management,” were introduced in January and February 2026 and are now moving forward through both chambers.

Legislative records show the bills are formally linked, with each designated as “Same As/Similar To” the other, confirming that Hawaii’s full legislature—not just one chamber—is advancing the emergency powers framework.

The legislation explicitly cites COVID-19 as justification for strengthening emergency authority, stating:

“The COVID-19 pandemic highlights the importance of clear legal frameworks for state and county emergency management to ensure that the State and counties are ready for any type of emergency.”

You can see which state legislators are backing these bills further down in this article.

Governor Authorized to Quarantine Residents & Enter Private Property Without Permission

One of the most consequential provisions would formally authorize forced quarantine and government entry onto private property.

The bill states that Hawaii Governor Josh Green (D) may:

“Require the quarantine or segregation of persons who are affected with or believed to have been exposed to any infectious, communicable, or other disease…”

It further grants authority to:

“Authorize without the permission of the owners or occupants, entry on private premises for any of these purposes.”

This authority applies not only to confirmed infections but also to individuals merely “believed to have been exposed.”

The legislation also allows the government to order the destruction of property deemed hazardous:

“Authorize that public nuisances be summarily abated and, if need be, that the property be destroyed by any police officer or authorized person.”

Governor Can Suspend Laws, Licensing Requirements, & Regulatory Protections

The bills explicitly empower the governor to suspend existing laws during an emergency, including medical, licensing, and regulatory protections.

The legislation states the governor may:

“[Suspend] the laws, in whole or in part… including licensing laws, quarantine laws, and laws relating to labels, grades, and standards.”

It also authorizes suspension of any law deemed to impede emergency operations:

“Suspend any law that impedes or tends to impede… emergency functions.”

Crucially, the legislation allows such suspensions to continue beyond the official emergency period:

“Any suspension of law… may continue beyond the emergency period…”

Government Authorized to Take Control of Private Infrastructure & Utilities

The legislation further empowers the governor to assume control of critical infrastructure, including privately owned facilities.

The bill states the governor may:

“Assure the continuity of service by critical infrastructure facilities, both publicly and privately owned… by taking over and operating the same.”

Additional provisions allow the government to:

- Shut off utilities

- Control distribution of goods

- Regulate or prohibit commerce

- Impose rationing

Specifically, the governor may:

“Regulate or prohibit… the storage, transportation, use, possession, maintenance, furnishing, sale, or distribution thereof, and any business or any transaction related thereto.”

Authority to Regulate Firearms & Seize Property

The legislation also grants authority to regulate firearms and confiscate property during emergencies.

It authorizes the governor to prohibit firearm possession during emergencies, meaning firearms that are normally legal could become unlawful to possess under emergency orders and subject to seizure.

The bill states the governor may:

“Regulate or prohibit the storage, transportation, use, possession… of firearms, and ammunition… and authorize the seizure and forfeiture.”

Governor Retains Sole Authority to Declare Emergencies

Under the proposed framework, Governor Green retains broad discretion to declare emergencies, including based on perceived threats.

The bill states:

“The governor… shall be the sole judge of the existence of the danger, threat, or circumstances giving rise to a declaration.”

Emergencies may be declared based on:

“Imminent danger or threat of an emergency or a disaster.”

This allows activation of emergency powers before an actual disaster occurs.

Legislature Adds New Definition of Disaster Including Disease Outbreaks & Bioterrorism

The Senate version expands the legal definition of “disaster” to explicitly include:

“Disease or contagion outbreaks, bioterrorism, terrorism, or incidents involving weapons of mass destruction.”

This codifies infectious disease emergencies as triggers for the expanded powers.

The move comes as President Donald Trump and Congress have already committed $5.5 billion toward preparing for a future influenza pandemic, while the World Health Organization vows such a pandemic is inevitable, U.S. scientists continue gain-of-function influenza experiments, and the administration launches its $500 million Operation Gold Standard influenza vaccine initiative.

Legislature Advances Bills Through Both Chambers

Legislative tracking records show both bills are progressing simultaneously:

- HB2236 was introduced January 28, 2026, and has already passed committee review in the House.

- SB2151 was introduced January 21, 2026, and is scheduled for further committee action February 24, 2026.

The bills are formally cross-linked, confirming coordinated legislative advancement.

Legislature Frames Bills as Clarification of Emergency Authority

Lawmakers describe the purpose of the legislation as clarifying and strengthening emergency management authority.

The bill states its purpose is to:

“Clarify state and county emergency management authority, ensure effective and adaptable emergency responses…”

The measures also allow the legislature to terminate emergency declarations by a two-thirds vote.

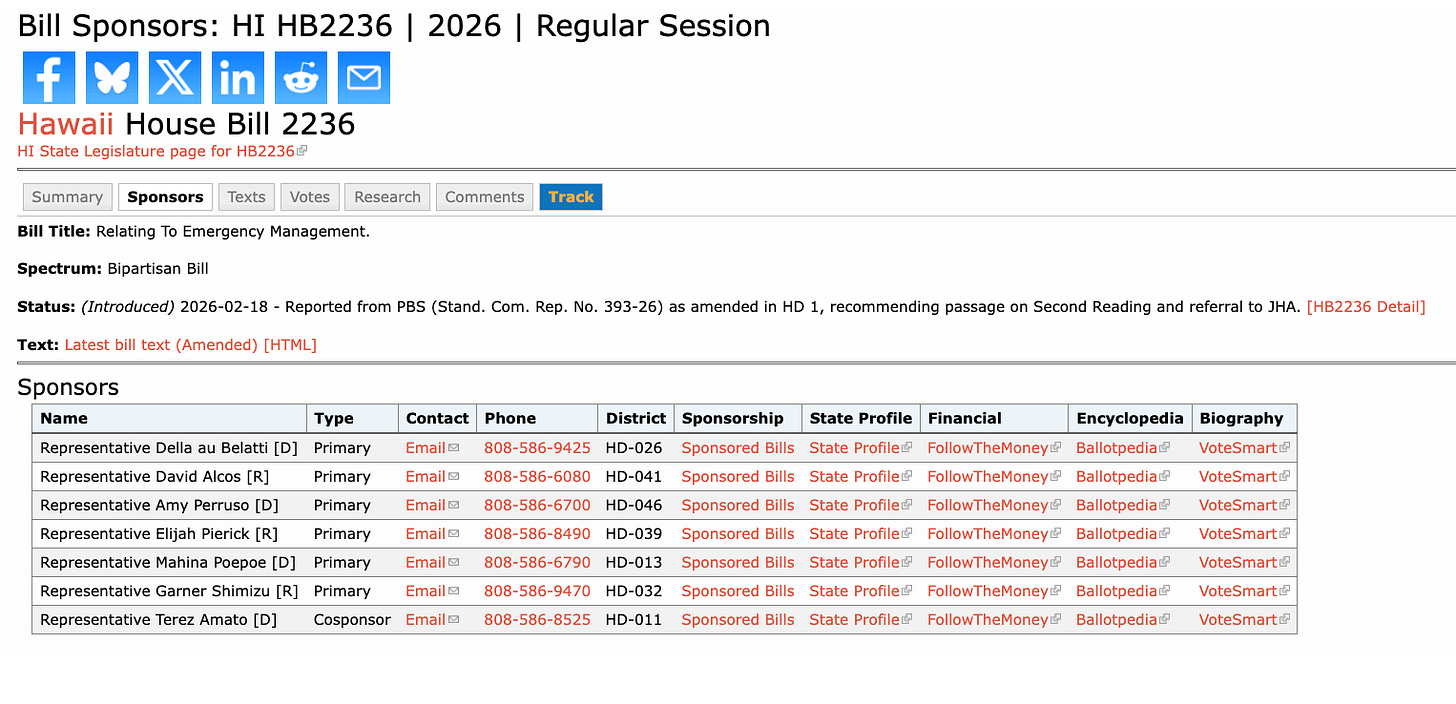

Which Legislators Are Backing the Bills

You can see which Representatives are backing HB2236 here.

You can see which Senators are backing SB2151 here.

Bottom Line

HB2236 and SB2151 would lock into permanent Hawaii law the authority to quarantine residents based on suspected exposure, enter private property without permission, suspend existing laws, prohibit firearm possession under emergency orders, and take control of private infrastructure and economic activity—all under an emergency declaration the governor has broad discretion to issue, including based on a perceived “threat.”

The legislation is advancing as the federal government pours billions into influenza pandemic programs, conducts gain-of-function experiments designed to alter influenza viruses, and builds out large-scale vaccine deployment initiatives intended for rapid rollout once a pandemic is declared.

At the same time, Congress, the White House, the Department of Energy, the FBI, the CIA, and Germany’s Federal Intelligence Service (BND) have confirmed that the COVID-19 pandemic was likely the result of lab-engineered pathogen manipulation.

That overlap creates a profound conflict-of-interest question: the same government and scientific establishment involved in creating and manipulating pandemic-capable pathogens is also expanding the legal authority to impose quarantines, override constitutional protections, restrict property rights, and control economic life if one of those pathogens triggers the next declared emergency.

If passed, Hawaii’s bills would ensure those powers are not improvised in the moment, but already written into law—allowing sweeping restrictions on residents to be activated immediately, the moment the next pandemic or declared threat emerges.

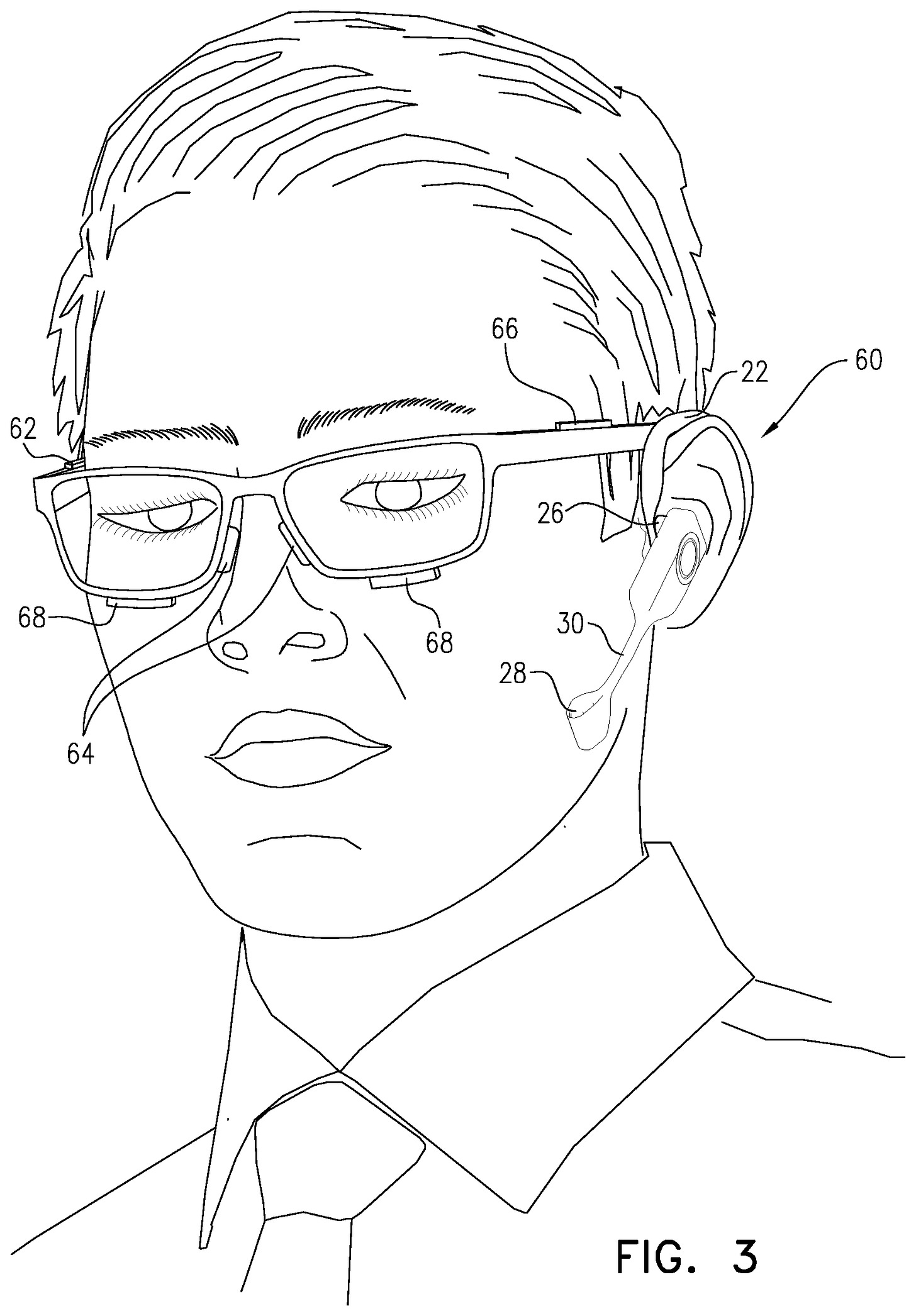

UK Government Plans to Use Delegated Powers to Undermine Encryption and Expand Online Surveillance

Delegated powers mean the specific rules (what gets scanned, what gets flagged) get written by a minister, not Parliament.

By Ken Macon | Reclaim The Net | February 18, 2026

The UK government wants to scan people’s photos before they send them. Not just children’s photos. Everyone’s.

Technology Secretary Liz Kendall spelled it out on BBC Breakfast, floating a proposal to “block photographs being sent that are potentially nude photographs by anybody or block children from sending those.” That second clause is the tell. Blocking “anybody” from sending potentially nude images requires scanning everybody’s messages. There’s no technical path to that outcome that doesn’t involve reading content the sender assumed was private.

Kendall said the government is conducting a consultation on “whether we should have age limits on things like live streaming” and whether there should be “age limits on what’s called stranger pairing, for example, on games online.” The consultation, she said, will look at all of these. That list now covers messaging apps, photo sharing, gaming, and live streaming. Any feature that lets you share an image with another person potentially falls inside it.

This is how the mandate grows. The government announced a push for new delegated powers on February 16, framing them around age verification for social media and VPNs.

What Kendall described in broadcast interviews goes well beyond that framing. The official press release mentioned consulting on how companies might “safeguard children from sending or receiving” nude images. Kendall’s BBC comments dropped the qualifier about children entirely, proposing to block “potentially nude” images sent by anyone.

The mechanism matters here. The government plans to introduce these new authorities as amendments to the Children’s Wellbeing and Schools Bill, which has already cleared the House of Commons and the House of Lords and sits in its final stage. Amendments introduced this late receive less parliamentary scrutiny than standard legislation.

Delegated powers allow a minister or department to issue secondary legislation without returning to Parliament for a full vote. That secondary legislation isn’t subject to the same debate as the original Act. The government gets to decide the specific rules, on its own timeline, with limited opportunity for challenge. Kendall told Good Morning Britain that the government plans to push new “online safety rules” every year through this mechanism.

The bill already contains amendments requiring age verification for VPNs (amendment 92) and for “user-to-user” services (amendment 94a). User-to-user covers most online platforms where people share content: social media, messaging apps, forums, and gaming services. Email and SMS are exempt. Most everything else isn’t.

A charitable reading of why the government wants delegated powers: it needs flexibility to update technical standards for age verification as the technology changes, and only if Parliament first approves the underlying requirements. The less charitable reading, and the more plausible one: the government wants the ability to impose VPN and social media age verification even if those amendments fail. It’s building a back door to bypass the outcome of the parliamentary vote it’s currently trying to win.

The House of Lords previously considered and rejected an amendment that would have required constant client-side scanning on most smartphones and tablets to detect child sexual abuse material. The Lords declined to adopt it. That rejection happened through the full parliamentary process.

The government is now signaling it may pursue functionally identical surveillance through delegated powers, bypassing the scrutiny that killed the first attempt. Kendall’s photo-scanning proposal and the failed Lords amendment work the same way technically. Both require software installed on your device to examine content before it leaves. The Lords’ amendment targeted CSAM via client-side scanning. Kendall’s proposal targets “potentially nude” images via client-side scanning. The mechanism is identical. The content category is different.

End-to-end encryption means the service provider can’t read your messages. Client-side scanning, which has already proven to be a disaster in Germany, means your device reads them first, before encryption activates, and reports back. The encryption remains technically intact. The privacy it’s supposed to provide doesn’t. This is the same architecture that Apple proposed and then abandoned in 2021 after security researchers explained what it actually meant for private communication.
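The ordering described above can be illustrated with a minimal sketch. It is not how any real messenger or any government proposal is implemented: the `Device` class, the `classifier` callback, and the `toy_encrypt` helper are all hypothetical names invented here, and the XOR pad is only a stand-in for real end-to-end encryption. The point is simply that the scan sees the plaintext before encryption runs, and that the report leaves the device separately from the ciphertext.

```python
import secrets
from dataclasses import dataclass, field
from typing import Callable, List


def toy_encrypt(data: bytes, key: bytes) -> bytes:
    """Toy XOR pad standing in for real end-to-end encryption (assumption)."""
    if len(key) < len(data):
        raise ValueError("key must be at least as long as the data")
    return bytes(b ^ k for b, k in zip(data, key))


def toy_decrypt(data: bytes, key: bytes) -> bytes:
    """XOR is its own inverse, so decryption reuses the same operation."""
    return toy_encrypt(data, key)


@dataclass
class Device:
    # Hypothetical classifier: returns True when content should be flagged.
    classifier: Callable[[bytes], bool]
    # Reports that would leave the device outside the encrypted channel.
    reports: List[str] = field(default_factory=list)

    def send(self, plaintext: bytes, key: bytes) -> bytes:
        # Step 1: the scan runs on the plaintext, before any encryption.
        if self.classifier(plaintext):
            # Step 2: a report is recorded separately from the message.
            self.reports.append(f"flagged message of {len(plaintext)} bytes")
        # Step 3: only now is the message encrypted for the recipient.
        return toy_encrypt(plaintext, key)


message = b"holiday photo"
key = secrets.token_bytes(len(message))
device = Device(classifier=lambda content: b"photo" in content)

ciphertext = device.send(message, key)
# The recipient still decrypts normally, so the encryption is "intact"...
assert toy_decrypt(ciphertext, key) == message
# ...yet a report about the content was generated before encryption.
assert len(device.reports) == 1
```

Because the classifier is just a function passed in, swapping it for a different one changes what gets flagged without touching the rest of the pipeline.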

The government hasn’t acknowledged that its photo-scanning proposal requires dismantling the privacy guarantee that makes encrypted messaging meaningful. It’s describing the outcome it wants, not the infrastructure required to deliver it.

Photo scanning that flags “potentially nude” images requires training a model to identify what nudity looks like, running that model continuously on a device, and reporting matches somewhere. The system built for that purpose can be retrained or repurposed. A scanner that identifies nudity can be adjusted to flag political content, protest coordination, or anything else a future government decides warrants detection.

The delegated powers structure means those future decisions don’t require new primary legislation. They require a minister, a statutory instrument, and limited parliamentary review.

Prime Minister Keir Starmer’s February 16 Substack noted that “private chats” are supposedly harming children without proposing to target them specifically. The official press release didn’t mention messaging apps at all. What Kendall said on television this week went further than either document. The consultation hasn’t launched yet. The powers to act on its findings, at speed, with reduced oversight, are already being written into law.

The U.S. Sanctions Cuban Journalist For Reporting On The U.S. Blockade

The Dissident | February 17, 2026

The U.S. has recently cut off Cuba’s source of oil from Venezuela and Mexico, with the intention, as Trump recently admitted, of creating a “humanitarian threat” in the hope that it will lead to regime change. Trump boasted that because of the blockade, “There’s no oil. There’s no money. There’s no anything.”

As Cuba-based journalist Marc Frank reported, due to the blockade, “Prices are soaring, power outages are increasing, and gas lines are growing. Public and private transportation are disappearing. Produce at markets is dwindling, and all but emergency surgeries have been canceled. The fear that the quality of life will quickly deteriorate is palpable”.

The U.S. is now taking this a step further and placing targeted sanctions on Cuban journalists doing critical reporting on the blockade.

A Miami-based pro-regime change outlet called CiberCuba reports that the U.S. has “imposed visa restrictions” on Cuban journalist Pedro Jorge Velázquez, known as El Necio, accusing him of “involvement in harassment campaigns against American diplomats in Cuba”.

In response, El Necio wrote, “I am an ordinary young Cuban. Five years ago, I began doing my work through social media and collaborating with press outlets. I have no employment ties whatsoever to the Cuban government: currently, I do not work in press media or state institutions.”

He noted that the accusation of “harassment” is in reference to his “latest journalistic investigation,” in which he uncovered “the purchase of fuel (gasoline) by US diplomats in Havana: the very same fuel that they block from Cuba, only to consume it themselves afterward.”

He said that the “sanction is irrelevant to me,” noting, “I have never had, nor have I ever requested, a visa to enter the US.” He added, however, that “we do need to denounce this serious violation of press freedom,” and that “this is not a personal attack, but a precedent for censorship and coercion against every young Cuban who speaks out against the blockade on Cuba or who practices journalism that does not please the Trump administration.”

The U.S. sanctions against El Necio for reporting on the U.S. blockade on Cuba mirror U.S. sanctions on Francesca Albanese, the UN’s special rapporteur for Palestine, in retribution for a report she published exposing U.S. corporations’ complicity in the Gaza genocide.

Similarly, to justify the sanctions, the U.S. accused Albanese of “writing threatening letters to dozens of entities worldwide, including major American companies across finance, technology, defense, energy, and hospitality”, in reference to her writing letters to companies fueling the genocide in Gaza, informing them of their violation of international law and participation in war crimes.

The sanctions also mirror the EU sanctions placed on the former Swiss army colonel Jacques Baud, in retribution for his criticism of the proxy war in Ukraine.

From Cuba to Palestine to Ukraine, sanctions are increasingly being used as a tool to silence and intimidate those exposing and critiquing Western foreign policy.

Israeli firms transform cars into intelligence devices: Reports

Al Mayadeen | February 17, 2026

Modern vehicles have evolved into internet-connected digital ecosystems, a transformation that is reshaping the global intelligence market, with “Israel” paying special attention to this rising domain, according to a new investigation by Haaretz.

In intelligence circles, information harvested from vehicles is known as “CARINT,” short for car intelligence. Today’s vehicles function as “computers on wheels,” equipped with built-in SIM cards, GPS systems, Bluetooth connectivity, and multimedia platforms that continuously transmit data.

The report reveals that at least three Israeli companies are operating in this expanding sector, developing tools that enable government clients to track vehicle movements in real time, cross-reference vast databases, and identify specific targets among thousands of cars on the road.

Industry sources cited in the investigation described the use of AI-powered “data fusion” systems that combine vehicle telemetry, roadside camera feeds, advertising data, and cellular metadata to construct comprehensive intelligence profiles. Rather than directly hacking a device, agencies are increasingly assembling what sources describe as a surveillance mosaic from legally or commercially available data streams.

The case of Toka

Among the companies identified is Toka, co-founded by former Prime Minister Ehud Barak and former Israeli military cyber chief Yaron Rosen.

According to documents and industry sources cited by Haaretz, Toka developed a product capable of infiltrating a vehicle’s multimedia system, pinpointing its location, and remotely activating microphones or dashboard cameras. The system was reportedly approved by “Israel’s” Security Ministry for presentation and eventual export.

The company said that as part of its 2026 product roadmap, it no longer sells the hacking tool.

Experts noted that exploiting vehicle vulnerabilities remains technically complex, as each manufacturer employs distinct digital architectures. However, the possibility of remote access to in-car microphones and cameras has raised acute privacy and security concerns.

Another Israeli firm, Rayzone, has reportedly begun selling vehicle-tracking tools through its subsidiary TA9. Unlike offensive hacking products, Rayzone’s system focuses on aggregating and cross-referencing data, including SIM-card tracking, Bluetooth signals, and license-plate recognition feeds.

The investigation suggests that the intelligence industry is gradually shifting away from high-profile phone-hacking technologies associated with firms such as NSO Group and toward large-scale, AI-enabled data analytics platforms.

In the United States, companies such as Palantir Technologies analyze license plate databases and vehicle registries, integrating them into broader intelligence systems. Israeli firm Cellebrite also works extensively with US law enforcement agencies in extracting and processing digital evidence, including vehicle-related data.

Vehicle intelligence expanded post Oct. 7

The Haaretz investigation further highlights that in the aftermath of Operation al-Aqsa Flood, Israeli authorities, with support from the private sector, developed advanced capabilities to locate vehicles stolen from army bases and border communities. According to the report, these tools were later integrated into military systems.

The article also points to China’s longstanding regulatory framework requiring domestic car manufacturers to transmit vehicle data to state authorities. It further notes that the Israeli Occupation Forces imposed restrictions on certain Chinese electric vehicles entering military facilities, citing security concerns.

Security analysts warn that the accelerating digitization of vehicles not only expands surveillance capabilities but also increases cybersecurity risks. Ethical hackers have previously demonstrated, in controlled environments, the ability to manipulate steering systems or disable engines remotely. Industry sources cited in the investigation indicate that some government clients are increasingly expressing interest in remote vehicle-disabling technologies.

At global intelligence exhibitions such as ISS World, often referred to as the “Wiretapper’s Ball”, artificial intelligence and real-time data fusion dominate discussions. AI systems now enable the rapid processing of millions of disparate data points, including vehicle telemetry, audio streams, and video feeds, transforming them into actionable intelligence with unprecedented speed.

Industry insiders argue that as vehicles become more connected, they will inevitably play a more central role in intelligence gathering. Privacy advocates, however, caution that the same connectivity that enhances consumer convenience may also underpin a powerful and potentially intrusive surveillance infrastructure.

The Haaretz investigation concludes that while directly hacking individual vehicles remains technically complex, AI-driven aggregation of vehicle-generated data could make such intrusions increasingly unnecessary, raising significant questions about privacy, regulation, and the future of digital mobility.

Palantir, Dataminr help build Gaza AI-driven digital prison system

A +972 Magazine investigation reveals that US firms Palantir and Dataminr are embedded in the US-Israeli post-war plan for Gaza through the Civil-Military Coordination Center (CMCC), a US-run hub coordinating Trump’s 20-point plan. A Palantir “Maven Field Service Representative” tied to Project Maven has been assigned to the center, integrating battlefield AI into Gaza’s future control structure.

Project Maven fuses satellite imagery, drone feeds, intercepted communications, and metadata into an AI platform described as “optimizing the kill chain.” Rights groups argue these AI-enabled systems have accelerated the genocide in Gaza, scaling up killings with minimal human oversight. UN figures show nearly 70% of verified fatalities are women and children, with entire families wiped out in strikes allegedly guided by AI systems.

Palantir has expanded cooperation with Israeli occupation forces since 2024, doubling its Tel Aviv presence and supporting war-related missions. Amnesty International lists the company among firms whose services helped facilitate genocide and starvation in Gaza. Dataminr, specializing in real-time social media surveillance, has also been integrated into the framework, feeding AI-driven threat intelligence into the evolving security architecture.

Under the so-called “Alternative Safe Communities” model, Palestinians would be forcibly relocated into fenced, heavily monitored compounds under US-Israeli control. Within these zones, AI systems would track phones, movements, and online activity, flagging individuals as “security risks,” effectively turning Gaza into an AI-driven digital prison and kill-list system.

This architecture has been compared to Nazi concentration camps in its logic of isolating, surveilling, and managing an entire population as a security threat, reducing civilians to data points under total algorithmic control.

Zionist-controlled companies to surveil British citizens

Press TV – February 17, 2026

The implications of the British state using technology produced by Zionist-controlled companies to surveil British citizens are beyond belief.

The cornerstone of a sovereign nation is the absolute control over its own justice, its own data, and its own watchmen. Yet today, the very machinery of British law enforcement is being quietly and systemically outsourced.

The British government has allowed the digital and physical infrastructure of the state to become a high-tech extension of a foreign power, driven by the pernicious influence of Zionism, an ideology that prioritizes the expansion of a foreign entity over the rights of people in the UK.

This is not merely a matter of procurement. It is a surrender of independence.

By embedding Zionist-linked firms into the heartbeat of British society, the government is importing a surveillance philosophy rooted in the subjugation of one people and applying it to its own subjects.

These are combat-proven technologies forged in the fires of the Gaza genocide, and they are now the primary eyes and ears of the Metropolitan Police.

The police use Israeli intelligence firm Cellebrite to unlock the phones and private lives of their own citizens. They also use BriefCam to track people’s movements through video synopsis.

BriefCam is a company co-founded by Gideon Ben-Zvi, a veteran of the IOF’s elite Unit 8200 intelligence corps, who openly admits to using Unit 8200 criteria to lead his ventures.

The reach of foreign intelligence into the streets is even more direct through Corsight AI, which provides facial recognition throughout the country.

Born as a subsidiary of Cortica, it was founded by Igal Raichelgauz, another alumnus of the Zionist military intelligence apparatus.

When our faces are scanned by software overseen by the architects of the occupation of Palestine, can we truly say that the British public is being policed by British consent?

But the intrusion goes deeper than software. It reaches the very hands of our officers on the front lines.

ISPRA, an Israeli specialist in riot control, has historically supplied the crowd management munitions used to police the streets.

When the tools used to suppress dissent in the UK are manufactured by a firm specializing in the containment of occupied territories, the line between domestic policing and foreign military occupation begins to blur.

Furthermore, Motorola Solutions, a company listed by the United Nations for its links to illegal settlements, is now deep inside our research projects.

Through initiatives like CREST and Connections, they’re building predictive policing tools designed to monitor the social media content and online lives of the British public.

When a company that facilitates surveillance in the West Bank is the same one mapping the future crimes of Londoners, we have fundamentally compromised our domestic integrity.

Links between Zionist movement and Lionel Idan

Lionel Idan is a key British prosecutor serving as the Chief Crown Prosecutor for the CPS and also the National Hate Crime Lead Prosecutor.

He’s currently being heavily lobbied by a network of powerful Zionist groups.

We’re not just talking about casual meetings.

Idan has held repeated engagements with the Israeli embassy and with Zionist lobby groups: the Board of Deputies of British Jews and the Community Security Trust (CST), an organization headed by convicted fraudster Gerald Ronson.

The objective is clear: to ensure that the Crown Prosecution Service (CPS) fully adopts the IHRA definition of antisemitism, a definition weaponized against anti-Zionists, as we saw during the attacks on Jeremy Corbyn and the Labour Party.

Lionel Idan has not hidden these alliances. In an op-ed for the Jewish News, he boasted that the CPS sits on the Antisemitism Working Group alongside the CST and the Jewish Leadership Council.

He confirmed that lobby groups, the CST and the Antisemitism Policy Trust, are now core members of the CPS External Consultative Group on Hate Crime.

Perhaps most concerning is that the national prosecution guidance is being shaped by these very groups. Idan has admitted that their involvement helps the CPS define the line where anti-Zionism becomes a criminal offense.

When the person overseeing London’s prosecutions attends Israel lobby annual dinners to celebrate new security task forces, where is the independence of the UK legal system?

The public should demand that the CPS remain an impartial body, free from the influence of political lobbyists and foreign interests.

THE CHILDREN GAMBIT

How Europe’s Political Class Weaponises Innocence — and Has Been Building This Machine for Years

Islander Reports | February 17, 2026

Before we start. These platforms aren’t innocent. They’ve extracted billions from our attention, manipulated our children’s dopamine cycles, censored truth tellers, handed our data to surveillance capitalism and slept soundly every night. Hold that. And then read what follows anyway — because what’s happening right now is something else entirely.

Let’s start with the money. Because the money never lies.

€1.2 billion. Ireland’s Data Protection Commission. Meta. May 2023. The largest GDPR fine in history, for routing EU citizen data to the United States without adequate protection. A record that lasted about five minutes.

€530 million. TikTok. May 2025. Same Irish authority. For sending European user data to China and then (this is the part they buried in the press release) lying about it during the inquiry. TikTok told regulators throughout the investigation it wasn’t storing EEA data on Chinese servers. In February 2025, it quietly admitted it had been. All along.

€345 million. TikTok again. 2023. Children’s data. €14.5 million from the UK’s Information Commissioner’s Office on top of that, same year, same issue. €91 million to Meta Ireland in September 2024 — they stored hundreds of millions of user passwords in plaintext. Just sitting there. No encryption. Exposed. €390 million to Meta the year before, for forcing users to accept personalised advertising as a condition of accessing their own accounts.

And then December 5th, 2025. The European Commission handed X — formerly Twitter, now Elon Musk’s megaphone and the primary target of every European leader who’s discovered that their citizens can organise against them online — a €120 million fine. First ever penalty under the Digital Services Act. For misleading users about the blue verification badge, concealing advertiser identities, and blocking government-approved researchers from accessing algorithmic data.

Over €2.5 billion. Just the verdicts. Just the ones that made it to conclusion. Fourteen active DSA proceedings still grinding through the machinery, with Meta and TikTok each facing potential fines of 6% of global revenue. That’s €9.9 billion for Meta. €9.3 billion for ByteDance. Numbers large enough to restructure companies. Numbers designed to make platforms obedient.
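As a rough check on the arithmetic above, the short Python sketch below totals the fines named in this piece and backs out the global revenue that a 6% ceiling would imply. The figures are copied from the text as stated and are not independently verified; the dictionary labels and function names are illustrative assumptions.

```python
# Fines as quoted in the article, in millions of euros (not independently verified).
# The labels are shorthand written for this sketch, not official case names.
FINES_EUR_M = {
    "Meta, data transfers (May 2023)": 1200.0,
    "TikTok, transfers to China (May 2025)": 530.0,
    "TikTok, children's data (2023)": 345.0,
    "TikTok, UK ICO (2023)": 14.5,
    "Meta Ireland, plaintext passwords (Sept 2024)": 91.0,
    "Meta, personalised advertising (2023)": 390.0,
    "X, first DSA penalty (Dec 2025)": 120.0,
}

# Ceiling cited in the article: 6% of global revenue.
DSA_MAX_FINE_RATE = 0.06


def total_fines_eur_m(fines):
    """Sum the listed fines, in millions of euros."""
    return sum(fines.values())


def implied_revenue_eur_bn(max_fine_eur_bn, rate=DSA_MAX_FINE_RATE):
    """Global revenue (in billions) implied by a maximum fine at the given rate."""
    return max_fine_eur_bn / rate


total = total_fines_eur_m(FINES_EUR_M)
print(f"Total of listed fines: EUR {total / 1000:.2f} billion")
print(f"Meta, implied global revenue: EUR {implied_revenue_eur_bn(9.9):.0f} billion")
print(f"ByteDance, implied global revenue: EUR {implied_revenue_eur_bn(9.3):.0f} billion")
```

On these inputs the listed fines come to roughly €2.69 billion, which is consistent with the "over €2.5 billion" figure, and the quoted maximum fines correspond to global revenues of about €165 billion and €155 billion respectively.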

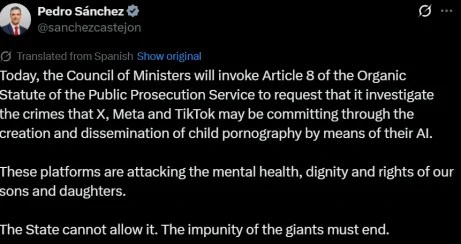

So when Pedro Sánchez walked out this morning and announced that Spain’s Council of Ministers would invoke Article 8 of the Organic Statute of the Public Prosecution Service to sic prosecutors on X, Meta and TikTok for “crimes they may be committing” through AI-generated child pornography, understand what you’re looking at.

This isn’t a regulator at the end of its rope. This is a political class that has already built the machine, tested the machine, extracted billions through the machine — and is now deciding what else the machine can reach.

“May Be Committing”

That’s the phrase. Not “has committed.” Not “is committing.” May be. Sanchez posted it on X — the very platform he’s threatening to prosecute — and the media swallowed it whole, no questions about evidence or methodology or whether a public prosecutor’s office is the right instrument for making technical judgements about AI image generation pipelines.

The Spanish government claims Grok produced three million sexualised images in eleven days, including over 23,000 involving minors. Strong numbers. Specific numbers. Precise to the point of being designed to prevent challenge — because you can’t interrogate evidence you haven’t been shown, and asking to see it means you’re defending the indefensible. Not one published source. Not one independent methodology. They arrived complete, ready-made for outrage.

That’s the genius of it. The children gambit works precisely because you cannot question it without becoming the villain of the story.

Pavel Durov said it plainly — and look, nobody should hold Durov up as a civic virtue. But he’s spent years watching governments use platform regulation as a control mechanism, and when he says Sanchez’s moves aren’t safeguards but steps toward total control, he’s speaking from operational experience. He’s seen this architecture before. From the inside.

Here’s what this moment actually is, in the longer register. Every time a Western liberal government needs to consolidate control over the information environment, it finds a victim group whose protection cannot be questioned. In the 20th century they used communists, terrorists, drug dealers. The 21st century discovered something more powerful — children. Unimpeachable. Unchallengeable. A shield so morally absolute that any surveillance infrastructure built behind it arrives pre-legitimised. Sanchez didn’t invent this playbook. He’s just the current page.

Here’s the question nobody in any press conference asked today. If you actually wanted to protect children from AI-generated abuse material — if that were the genuine, singular, burning priority — what would you do?

You’d hunt the producers. Fund specialist cyber units with the resources and legal powers to identify, locate and prosecute the people who generate and distribute child sexual abuse material. Build better reporting pipelines so victims and witnesses have direct, fast routes to enforcement. Nail the distribution networks — the forums, the channels, the file-sharing infrastructure where this material moves — with targeted operations and international cooperation. Invest in takedown technology that works at scale. These are the unglamorous tools of actual child protection. Forensic. Technical. Expensive. Slow. Not suited to a press conference.

None of that is what Sanchez announced today. What he announced was prosecution of three of the most visible American technology platforms, with unverified statistics, under a legal mechanism designed for emergency government intervention in the public interest — on the same morning Keir Starmer in London announced restrictions on the last tool of genuine online privacy.

That’s not child protection. That’s the political class treating every ordinary user as a pre-suspect, building infrastructure that watches everyone in order to catch a tiny minority — and using the minority as the justification.

When someone says “think of the children,” look at what they’re actually building. Because what they’re building right now, across Europe and Britain, is an internet where you need permission to speak.

The Network They Actually Protected

Let’s be precise about who’s invoking children to demand your identity.

Jeffrey Epstein ran an international child trafficking operation for decades. Not speculation. Court and DOJ documents. Thirty-five girls identified by Palm Beach police in 2005. FBI reports going back to 1996. Federal prosecutors in Florida prepared a 60-count draft indictment in 2007 — conspiracy, sex trafficking of minors, enticement — charging Epstein and three co-conspirators described as employees who “persuaded, induced, and enticed individuals who had not attained the age of 18 years to engage in prostitution.”

The names of those three co-conspirators were in the indictment. Then US Attorney Alexander Acosta gave Epstein 13 months in county jail with work release six days a week and immunity for “any potential co-conspirators” — in direct violation of federal victims’ rights law. The investigation was shut down. Epstein walked. The network persisted.

Fast forward. January 2026. The Department of Justice releases 3 million pages (a mere 2% of what it has in its possession) under a law Congress passed unanimously demanding transparency. Victims’ names exposed. Driver’s licenses published. Witness statements naming perpetrators? Redacted. Draft indictment naming co-conspirators? Still redacted. Attorneys for over 200 victims called it “the single most egregious violation of victim privacy in one day in United States history” and accused DOJ of “hiding the names of perpetrators while exposing survivors.”

Congressmen like Thomas Massie had to read names aloud on the House floor before DOJ would release them. Rep. Ro Khanna: “The survivor statements to the FBI naming rich and powerful men who went to Epstein’s island, his ranch, his home — who raped and abused underage girls — they were all hidden.”

Now look at who’s demanding you hand over your identity to speak online.

Keir Starmer, the man proposing VPN bans and bypassing Parliament to regulate your thumbs on a screen, appointed Peter Mandelson as UK Ambassador to the United States in December 2024. Mandelson called himself Epstein’s “best pal” in Epstein’s 50th birthday book. Their friendship continued after Epstein’s 2008 conviction. Emails released in the January 2026 DOJ files show Mandelson received £75,000 in payments from Epstein between 2003 and 2004, leaked classified government information to him while serving as Business Secretary in 2009-2010, and sent messages suggesting Epstein was wrongfully convicted.

Starmer knew about the Epstein connection when he made the appointment. Mandelson had already resigned from government twice before — conflicts of interest, financial misconduct — and the Epstein relationship was public record. Starmer appointed him anyway. Made him Britain’s top diplomat. Gave him the US ambassador post. When the files dropped and the depth of the relationship became undeniable, Starmer’s chief of staff Morgan McSweeney — who recommended Mandelson — resigned. Then Starmer’s communications director. Then his cabinet secretary. Three senior aides gone in days.

Mandelson is now under criminal investigation by the Metropolitan Police for misconduct in public office. US Congress has requested he submit to interview as part of its investigation into Epstein’s co-conspirators and enablers.

And Starmer — whose government just had VPN downloads surge 1,800% because British citizens don’t trust him with their browsing data — is the man now lecturing the public about online child safety.

This isn’t hypocrisy. It’s consistency. The same political class that gave Epstein’s network immunity and protected co-conspirators for two decades is now demanding total visibility over your identity. The same Department of Justice that hid perpetrators and exposed survivors is the one telling you encryption backdoors are necessary to protect children. The same institutions that shut down the Epstein investigation in 2008 and buried the names in 2026 are building the Digital Identity Wallet, the fact-checker networks, the 24-hour removal mandates.

When they say this is about protecting children, look at the Epstein files. Look at who they protected. Look at who they prosecuted. Look at who they gave immunity. Look at whose names are still redacted while survivors’ information gets published.

Then ask yourself why these exact same people need to know who you are before you’re allowed to speak.

What This Actually Is — Unelected, Unaccountable, and Expanding

Here’s what nobody in the mainstream coverage will say: the regulatory apparatus now targeting these platforms was not built by people you voted for.

Picture what happens when a flag arrives. It’s 2am. A compliance officer at a major platform — a 26-year-old in Dublin or Amsterdam with a policy degree and a quota — opens an alert. A Brussels-appointed body has flagged a post as potentially harmful. The DSA gives the platform 24 hours to act or face fines of up to 6% of global revenue. There’s no named accuser. No court order. No adversarial process. Just a designation, a deadline, and a number so large that hesitation is financially irrational. The post gets removed. The writer wakes up to find their words gone. The politician whose opponents wrote it points elsewhere. The regulator points at the law. The compliance officer points at the process.

Nobody elected any of them.

The European Commission is not elected. Its commissioners are appointed by governments, approved by a parliament most Europeans couldn’t name the composition of — and its enforcement apparatus, the officials running fourteen DSA proceedings and handing out nine-figure fines, operates at a distance from democratic accountability that is not incidental but structural. The “trusted flaggers” embedded in the DSA framework, deputised to mark content for priority removal, are appointed bodies. Ofcom in the UK is a regulator, not an elected chamber. The European Board for Digital Services, coordinating enforcement across 27 countries, answers to no electorate anywhere on earth.

Sanchez and Starmer announce the intention. The technocrats execute it. And when it goes wrong — when the journalist’s article vanishes into a compliance process with no appeal, when the civil servant’s flagging of “migrant hotel” videos turns out to be political interference dressed as child protection — there is no one to vote out. The politician points at the regulator. The regulator points at the law. The law was written in workshops whose attendees you’ll never know. Democratic majorities change. Regulatory architecture doesn’t.

That’s not a flaw in the system. It’s the system working exactly as it was designed.

Britain and the VPN — The Moment the Mask Slipped

The week before Sanchez made his announcement, Keir Starmer was in London saying “no platform gets a free pass.” New powers to restrict social media. AI chatbots brought under the Online Safety Act. Infinite scrolling — the physical act of moving your thumb down a screen — to be regulated. Action in “months, not years.” And crucially, explicitly, openly: bypassing the parliamentary scrutiny that would normally apply to legislation this significant. He said it out loud. The urgency is too great for debate.

But the detail that should stop every person who cares about liberty cold is the VPN proposal.

Let’s be clear about what a VPN actually is, because the political class is clearly hoping you don’t know and don’t care to find out.

A Virtual Private Network encrypts your internet connection and masks your IP address — your digital location, the identifying tag that follows you across every website you visit, that your internet service provider logs, that governments can and do compel ISPs to hand over. When you use a VPN, your traffic passes through an encrypted tunnel. Your ISP sees that you’re connected to a VPN server. That’s it. They cannot see where you go. They cannot see what you say. They cannot read your communications.
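The tunnel described above can be illustrated with a toy model in Python. This is a sketch under loud assumptions, not how any real VPN is implemented: the XOR with a random one-time key merely stands in for real tunnel encryption (actual services use protocols such as WireGuard or OpenVPN), and every address, hostname and function name below is hypothetical.

```python
import secrets
from dataclasses import dataclass


@dataclass(frozen=True)
class Packet:
    source: str
    destination: str
    payload: bytes


def xor_bytes(data: bytes, key: bytes) -> bytes:
    """Toy cipher: XOR with a one-time key. A stand-in for real encryption."""
    return bytes(d ^ k for d, k in zip(data, key))


def wrap_in_tunnel(inner: Packet, vpn_server: str, key: bytes) -> Packet:
    """Hide the real destination and content inside an encrypted outer packet."""
    plaintext = f"{inner.destination}|".encode() + inner.payload
    if len(key) < len(plaintext):
        raise ValueError("one-time key must be at least as long as the data")
    return Packet(inner.source, vpn_server, xor_bytes(plaintext, key))


def unwrap_at_server(outer: Packet, key: bytes) -> Packet:
    """The VPN server decrypts and forwards the request under its own address."""
    plaintext = xor_bytes(outer.payload, key)
    destination, _, payload = plaintext.partition(b"|")
    return Packet(outer.destination, destination.decode(), payload)


def isp_view(packet: Packet) -> dict:
    """What an on-path observer such as an ISP can read from the outer packet."""
    return {"destination": packet.destination, "size": len(packet.payload)}


key = secrets.token_bytes(64)  # shared only by the client and the VPN server
inner = Packet("203.0.113.7", "news.example", b"GET /article")
outer = wrap_in_tunnel(inner, "vpn.example", key)
forwarded = unwrap_at_server(outer, key)

print(isp_view(outer))  # only the VPN server's address and a byte count
print(forwarded)        # the website sees the VPN server as the source
```

In this model the ISP learns only that the client is talking to the VPN server and how much data is moving, while the destination site sees the server's address instead of the client's. The trust has moved rather than vanished: the VPN operator holds the key and can see what the ISP no longer can.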

This is the tool that domestic abuse survivors use to hide their location from abusers. That investigative journalists use to protect their sources. That activists use to organise without government surveillance. VPNs aren’t a loophole. They’re a lifeline.

After the UK Online Safety Act came into force, VPN downloads in Britain surged by 1,800%. Half the top ten apps in British app stores became VPN services. Ordinary British citizens — not criminals, not paedophiles, not terrorists — reached for the exact same tool that people under authoritarian regimes use to avoid state surveillance, because they didn’t want to submit government-verified identity just to browse normally.

Starmer’s response to that 1,800% signal was to propose restricting VPNs.

Not to reconsider whether the surveillance infrastructure was too invasive. Not to ask why a free people felt the need for anonymity tools in a democracy. No — the tool of privacy is the problem. The loophole to be closed.

And here’s the thing that proves this was never about children. Ban commercial VPNs tomorrow and any determined teenager circumvents the ban within hours, using cheap cloud servers, open proxies, or custom tunnels for less than a dollar a month. The only people genuinely impacted are the ones relying on VPNs for legitimate safety: the abuse survivor hiding their location, the journalist protecting a source, the person who simply doesn’t want their ISP building a commercial profile of their private reading habits. A VPN ban doesn’t protect children. It closes the last gap in the surveillance infrastructure. It means that when the DSA triggers an investigation into your political commentary, when the Brussels-appointed fact-checker flags your article, there’s nowhere left to go. No tunnel. No private space. Just a 1984 dystopia, digitally enhanced.

The Wallet Nobody’s Talking About

Beneath all of this — quieter, slower, more permanent than any headline — is the piece of architecture that makes everything else irrelevant to debate once it’s in place.

By December 2026, every EU member state is legally required to provide its citizens with a European Digital Identity Wallet. Not a proposal. Law: Regulation (EU) 2024/1183, in force since May 2024. Major platforms will be required to accept it as a login mechanism. The private sector (banks, retailers, online services, social media) can request verified identity information through it.

Brussels will tell you the privacy protections are robust. And it’s worth taking that position seriously, because it isn’t entirely dishonest.

Article 5a of the regulation is real. It states explicitly that relying parties — the companies and platforms using the wallet — “shall not refuse the use of pseudonyms, where the identification of the user is not required by Union or national law.” The Commission points to this as the safeguard. They have a point. It’s in the law. It’s binding. If you want to use your wallet pseudonymously on a platform that has no legal requirement to know who you are, the regulation says you can. Proponents argue this is a meaningful, enforceable right — and that critics conflating the wallet with mandatory real-name requirements are misreading the text.

The problem is the fourteen words the Commission would prefer you not to dwell on: where the identification of the user is not required by Union or national law.

That clause means the pseudonymity right exists only in the space where no law has yet required your identity. It is protection that any member state can legislate away, for any service, with a single national law and a stated reason. Child protection. Anti-terrorism. Financial crime. Age verification. The reasons are not hard to find. The EU has no override mechanism — Brussels cannot prevent a member state from passing a law that, in its domestic application, triggers the exception and requires identification. So the right survives only until a government decides it shouldn’t. One parliament. One vote. The pseudonymity is gone for that service, in that country — legally, permanently, with the full blessing of the regulation’s own text.

And there’s something else the Commission won’t volunteer. The architecture meant to enforce the pseudonymity right — the mechanism that would actually prevent platforms from demanding your identity when they have no legal right to — was quietly gutted in implementation. Privacy advocates at epicenter.works, the only civil society organisation that worked on this file throughout the entire reform process, found that the Commission made relying party registration certificates optional rather than mandatory. Without mandatory certificates, the wallet cannot verify whether a company’s request for your real identity is legitimate or overreaching. Tech giants can demand identification in contexts that don’t legally require it. There is no technical mechanism to stop them. The safeguard exists in the legislation. The infrastructure that would make the safeguard real was made optional in the implementing regulations.

The Commission was told this directly. They proceeded anyway.

Civil society organisations warned EU officials in an open letter that the wallet “may eliminate anonymity, leading to over-identification and a loss of privacy.” Unacknowledged. One hundred and thirteen free speech and privacy experts wrote separately to raise similar concerns about the broader regulatory framework. Ignored. The pattern of constructing the infrastructure first and addressing rights concerns later — or not at all — is not a run of oversight failures. It’s a consistent set of choices made by people who understood exactly what they were choosing.

The Machine Is Already Running

People keep framing this as something that might happen. Future concerns. Hypothetical overreach.

It’s not the future.

The European Democracy Shield is operational — fifty action points, a European Centre for Democratic Resilience, a state-funded network of fact-checkers on Brussels money with a Brussels mandate, described in their own documents as “rapid response capacity” for information “crises.” The Commission decides what a crisis is. There is no external appeal. Just a bureaucrat with a mandate to act within 24 hours and a definition of disinformation so broad that it extends, in the Commission’s own telling, to content “that is not illegal.”

How broad? In May 2025, the Commission hosted a closed-door workshop with platform compliance teams. Training exercises. Internal documents. The US House Judiciary Committee obtained these documents under subpoena — you can disagree with the committee’s politics but you can’t argue with what the documents actually show. One exercise asked participants how to handle a post: an image of a teenage Muslim girl in a hijab alongside the text “we need to take back our country.” The exercise classified the combination as “illegal hate speech” requiring removal. Now, a reasonable person might argue about that specific scenario. Fine. Argue it. But the fact that this is the level at which European regulators are working — training platform compliance teams to remove common political sentiment combined with religious imagery, in closed-door workshops, before any court has ruled, before any democratic debate has happened — tells you something important about where the definitions are pointing.

Think about what that means in practice. Not in theory — in practice. A compliance officer at a platform with 400 million users gets a flag from a Brussels-funded body. The post contains a political opinion combined with an image. The body has designated it harmful. The platform has 24 hours. The alternative is a fine that could be measured in billions. Nobody phones a judge. Nobody consults the person who wrote it. The post disappears. And when it does — when that specific combination of political sentiment and religious imagery gets quietly removed from 400 million people’s feeds at 2am by someone following a process designed in a workshop that was closed to the public — that isn’t a transparency obligation. That’s the state deciding what the public is allowed to see. And doing it with plausible deniability built in at every layer.

That fact-checker network plugs directly into DSA enforcement. Platforms — X, Meta, TikTok, and by mid-2026 almost certainly ChatGPT, which already has three times the user numbers needed to trigger Very Large Online Platform designation — will be legally required to act on those findings. Not consider them. Act. Within 24 hours. Or face fines of 6% of global revenue.

The €120 million fine X received in December 2025 wasn’t for hosting child abuse content. It was for opacity — for not giving government-approved researchers access to the recommendation algorithm that determines what information reaches citizens. The Commission called it a transparency obligation. What it actually was: the state asserting the right to see inside the machine that shapes what the public thinks, so it can instruct the machine to shape it differently.

And when the Digital Identity Wallet closes the last gap — when the pseudonymity is quietly legislated away by a member state with a “reason,” when the VPN tunnel gets restricted, when every platform knows exactly who is saying what with a government-verified name attached — the system is complete. Everyone who speaks online, identified. Everything said, attributable. Every flag by a Brussels-appointed body, actionable within a day.

All of it constructed, piece by deliberate piece, in the name of protecting children from harm.

Final thoughts

The Soviet Union had a name for the officials who ran its censorship apparatus. Guardians of the public good. They had fact-checkers — called editors, party reviewers, information officers. Rapid response systems. Legal frameworks for acting on speech that threatened the stability of the state. Most of them genuinely believed they were protecting something real. That’s what makes these systems so durable — the people inside them are sincere.

They didn’t think of themselves as censors either.

What you are watching, from Madrid to London to Brussels, is the construction of a digital order in which the ability to speak freely, anonymously, without state knowledge, is being dismantled — not through jackboots but through frameworks, directives, DSA workshops, government-funded fact-checker networks, and the entirely reasonable-sounding proposition that we must protect our children.

Sánchez is a man whose government has been at war with X since the platform gave his opponents a direct line to Spanish voters that bypassed media institutions his party spent years cultivating. Starmer is a man whose government monitored social media during a domestic political crisis and then moved to expand its legal authority over the very platforms that let citizens talk about what they saw. The European Commission is a body of unelected officials who trained platform compliance teams, in closed-door workshops, to remove political sentiment they’d categorised as harmful — and then ignored 113 experts who wrote to warn them what they were building.

Keir Starmer is a man who appointed an Epstein associate as his personal envoy to Washington, knowing the relationship, knowing the history, and, when it collapsed, appointed himself the guardian of online child safety.

These. Are. The self-appointed guardians of the children.

They gave Epstein’s co-conspirators immunity and are still hiding their names two decades later. But they need to know yours before you can post a political opinion. They protected a trafficking network with clients in the highest levels of Western power. But you’re the threat that requires a Digital Identity Wallet. They redacted the men who procured children for a convicted paedophile while publishing the victims’ driver’s licenses. But your VPN is the problem that demands legislative action.

Call that what it is.

They didn’t prosecute the network because they were the network’s best customers. So how dare they invoke children’s safety to strip yours.

€2.5 billion extracted. Fourteen proceedings active. A Digital ID mandate rolling out across 27 countries by year’s end. VPNs under legislative attack in the birthplace of the Magna Carta. Parliamentary scrutiny openly bypassed in London. A Democracy Shield with a rapid response protocol for information crises that no one elected anyone to define.

They’ve been building this for ten years. The fines, the frameworks, the wallets, the fact-checkers, the VPN bans, the bypassed parliaments. Layer by layer. Always with a reason. Always with a child somewhere in the justification.

They’re nearly done.

And when it’s finished — when the wallet is in your pocket, the fact-checkers are wired to the platforms, the pseudonymity has been legislated away in some member state that needed a “reason,” the last encrypted tunnel closed — they will stand in front of all of it and tell you it was always, only, ever about the children.

An internet where you need permission to speak isn’t a safer internet. It’s a controlled one.

Epstein’s co-conspirators walk free while you need state permission to call them what they are.

Believe them if you want. History will know what it was.

Macron, Merz, and von der Leyen Defend Expanded Speech Controls

The Munich Security Conference just became a defense session for Europe’s most ambitious censorship regime

By Dan Frieth | Reclaim The Net | February 16, 2026

Emmanuel Macron stood before the Munich Security Conference last week and offered a blueprint for what European governments should be allowed to delete from the internet. The French president wants mandatory identity verification for social media users, one account per person, algorithm transparency on the government’s terms, and the legal authority to block platforms that refuse to comply.

“We have to be sure there is one single person with one account,” Macron said. “If this is an AI system, if this is bot or organized by big organization, it should be just forbidden.”

The statement describes a system where every social media user would have their identity verified by platforms and tied to a single permitted account. Anonymous speech, pseudonymous commentary, and the ability to maintain separate personal and professional presences online would effectively end for anyone using platforms that serve the European market.

Macron suggested this as a way to protect democracy. The mechanism would give governments a powerful tool to identify, track, and silence any user whose speech they find objectionable.

France is moving to ban social media access for anyone under 15, a policy that requires verifying every user. Macron defended this by characterizing free expression online as a form of brainwashing.

“Free speech would mean I will give the mind, brand the heart of my teenagers to algorithm of big guys,” he said. “I’m not totally sure I share the values, or Chinese algorithm without any control. We are crazy.”

The argument runs as follows: letting young people encounter ideas online without government permission is insanity. The solution requires every user to prove their age to access platforms where public discussion happens.

Macron suggested that speech illegal in newspapers should remain illegal when moved online. “How is that the craziest and most harmful narratives can go unchecked in our digital space, where they will fall under the law if published in print?”

The question assumes “harmful narratives” is a category the government should define. It also assumes the government should have the power to prevent people from encountering ideas it has labeled crazy.

Macron invoked the Digital Services Act as the foundation for expanded censorship across Europe. “This is a very important regulation because for the first time we created the framework to regulate this platform.”

The DSA gives EU regulators the authority to demand content removal from platforms. Macron called for going further: using the law to “excuse those who clearly decide not to respect our rules and our regulation” and to “block all those [who allow] interferences in our systems.”

He offered a familiar list of speech categories he wants suppressed: “racist speech, hateful speech, anti-Semitic speech.” These terms have no fixed legal definition that applies uniformly across EU member states. Who is racist, what constitutes hatred, which criticism of which policies counts as anti-Semitism: these determinations would be made by regulators and platforms operating under government pressure.

Macron described limits on speech as somehow inherent to democracy itself: “When you have free speech, you have respect, you have rules, and the limit of my freedom is the beginning of your freedom.”

This formulation treats speech as equivalent to physical coercion. Your words are framed as a boundary violation against others simply by existing. The speech that most requires protection is speech that offends, that challenges consensus, that the powerful would prefer to suppress. Macron’s framework offers no protection for any of it.

German Chancellor Friedrich Merz, who opened the conference, echoed the European position that speech protections should end where government-defined values begin.

“A divide has opened up between Europe and the United States,” Merz said. “And Vice President JD Vance said this very openly here at the Munich Security Conference a year ago, and he was right. The battle of cultures of MAGA in the US is not ours. Freedom of speech here ends where the words spoken are directed against human dignity and our basic law.”

“Human dignity” is the phrase German law uses to justify prosecuting speech. The Constitutional Court has interpreted it to cover insults, Holocaust denial, and an expanding category of expression that authorities determine undermines respect for persons or groups. It is the legal mechanism under which German police have raided homes over social media posts and prosecuted people for memes.

European Commission President Ursula von der Leyen joined the censorship chorus with a declaration of territorial authority over online expression.

“I want to be very clear: our digital sovereignty is our digital sovereignty,” she said, adding the EU “will not flinch where this is concerned.”

Von der Leyen described European speech regulation as under attack from the United States, “which has wielded the threats of tariffs on partners to secure preferential access and has decried the EU’s digital rules as an assault on free speech.”

The EU’s digital rules are an assault on free speech. The DSA empowers bureaucrats to demand platforms remove content, under threat of massive fines.

The EU has opened formal proceedings against X for its policies. European regulators have forced platforms to suppress content that would be legally protected in the United States.

Von der Leyen framed resistance to this regime as a threat to Europe’s “democratic foundation.” She claimed Europe has “a long tradition in freedom of speech” while defending a legal structure designed to ensure certain speech never reaches European audiences.

“The European way of life – our democratic foundation and the trust of our citizens – is being challenged in new ways,” she said. “On everything from territories to tariffs or tech regulations.”

The phrasing groups speech regulation with tariffs and territorial disputes. All three are matters where Europe will defend its sovereignty. What Europeans are permitted to say, read, and share online is treated as equivalent to where national borders fall.

The leaders who gathered in Munich spoke of protecting democracy while proposing tools that would let governments identify and punish dissent. They invoked free speech while demanding the power to decide which speech is free. They claimed to defend Europe while stripping Europeans of the ability to speak freely online.

Keir Starmer-tied think tank paid PR firm to target The Grayzone

By Kit Klarenberg | The Grayzone | February 16, 2026

Leaked files have revealed that Labour Together, the shadowy think tank run by disgraced former top Keir Starmer aide Morgan McSweeney, paid the Washington DC-based corporate intelligence firm APCO Worldwide to spy on journalists who reported on its corrupt handling of campaign finances.

The reporters named appear to have been targeted for their efforts to investigate how the UK’s Labour Party elites spent 730,000 pounds in undeclared donations to install Starmer as their leader.

The files show APCO used those funds to oversee the fabrication of a dodgy, evidence-free dossier claiming that Russia was behind damaging disclosures about Labour Together, which it submitted to the National Cyber Security Centre (NCSC) of Britain’s GCHQ — London’s equivalent to the US National Security Agency.

The “significant persons of interest” listed in APCO’s McCarthyite casebook included The Grayzone and myself.

According to my APCO dossier, “While a self described ‘investigative journalist,’ he is an author for the Gray Zone. The site has been described as a ‘conspiracy blog’ and ‘Wagner propaganda channel.’ In 2023,” the dossier reads, I “was arrested by counter-terror police after [I] arrived in the UK.”

APCO bills itself as “a trusted and strategic advisor… that drive[s] our clients’ missions and objectives forward.” Despite its massive contract with Labour Together, the files show the PR firm struggled to identify its targets, and proved unable to establish the most basic facts about them.

When APCO branded The Grayzone as “Wagner propaganda,” it seemed to have confused us with “Grey Zone,” an entirely unrelated and now-defunct Telegram channel affiliated with the Russian military contractor. APCO also claimed I was “arrested by counter-terrorism police” in May 2023 upon returning to Britain. In fact, I had been detained, not arrested.

APCO also targeted journalists Matt Taibbi and Paul Holden, who led investigations into Labour Together’s potentially criminal activities, based on leaks and Freedom of Information requests. The PR firm had sought to secure “leverage” over Holden in order to sabotage his work.